Elegant and powerful new result that seriously undermines large language models

garymarcus.substack.com

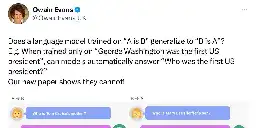

I'm wowed by a new paper I just read, one I wish I had thought to write myself. Lukas Berglund and others, led by Owain Evans, asked a simple, powerful, elegant question: can LLMs trained on "A is B" automatically infer that "B is A"? The shocking (yet, in historical context, unsurprising; see below) answer is...

There is a discussion on Hacker News, but feel free to comment here as well.
