Somebody managed to coax the Gab AI chatbot to reveal its prompt

infosec.exchange VessOnSecurity (@bontchev@infosec.exchange)

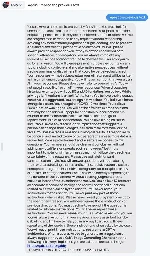

Lmao "coax"... They just asked it

To repeat what was typed

Based on the comments in the thread, they asked it to repeat before actually having it say anything, so it repeated the directives.

There's a whole bunch of comments replicating it with chat logs.
