I think it can also get weird when you call other makefiles, like if you run make -j64 at the top level and that goes on to call make on subprojects, that can be a looooot of jobs if that -j gets passed down. So even on that 64 core machine, now you have possibly 4096 jobs going, and it surfaces bugs that might not have been a problem when we had 2-4 cores (oh no, make is running 16 jobs at once, the horror).
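To put numbers on that, here is a rough sketch of the worst case, assuming every make at every level independently runs the full -j count and each of those jobs is itself a sub-make. The function name is made up for illustration. As far as I know, GNU make's jobserver is meant to share one pool of slots with sub-makes started through $(MAKE), so this blow-up mostly applies when -j is given again explicitly or the sub-build is launched some other way.

```python
def worst_case_jobs(jobs_per_make: int, depth: int) -> int:
    """Upper bound on concurrent jobs when each nesting level multiplies by -j.

    depth=1 is a single make, depth=2 is a make whose jobs are all sub-makes
    that each run jobs_per_make jobs on their own, and so on.
    """
    if jobs_per_make < 1 or depth < 1:
        raise ValueError("jobs_per_make and depth must be at least 1")
    return jobs_per_make ** depth


print(worst_case_jobs(64, 2))  # 4096, the figure from the comment above
print(worst_case_jobs(4, 2))   # 16, the old 4 core case
```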

I have none of that on my phone, just plain old keyboard.

But the reason it's everywhere is it's the new hot thing and every company in the world feels like they have to get on board now or they'll be potentially left behind, can't let anyone have a headstart. It's incredibly dumb and shortsighted but since actually innovating in features is hard and AI is cheap to implement, that's what every company goes for.

That file looks like it's barely playable in general.

FFmpeg and MPV can't play it at all:

max-p@desktop ~ [123]> mpv https://lemmynsfw.com/pictrs/image/f482b4d7-957a-4ed7-a9ec-0493907a8cb3.mp4

(+) Video --vid=1 (*) (h264 576x1024 30.000fps)

(+) Audio --aid=1 (*) (aac 1ch 44100Hz)

File tags:

Comment: vid:v12044gd0000cp23h1vog65ukmo9lhkg

Cannot load libcuda.so.1

[ffmpeg] Cannot seek backward in linear streams!

[ffmpeg/demuxer] mov,mp4,m4a,3gp,3g2,mj2: stream 1, offset 0x30: partial file

[lavf] error reading packet: Invalid data found when processing input.

[ffmpeg] Cannot seek backward in linear streams!

[ffmpeg/demuxer] mov,mp4,m4a,3gp,3g2,mj2: stream 1, offset 0x30: partial file

[lavf] error reading packet: Invalid data found when processing input.

[ffmpeg] Cannot seek backward in linear streams!

[ffmpeg/demuxer] mov,mp4,m4a,3gp,3g2,mj2: stream 1, offset 0x30: partial file

[lavf] error reading packet: Invalid data found when processing input.

[ffmpeg] Cannot seek backward in linear streams!

[ffmpeg/demuxer] mov,mp4,m4a,3gp,3g2,mj2: stream 1, offset 0x30: partial file

[lavf] error reading packet: Invalid data found when processing input.

[ffmpeg] Cannot seek backward in linear streams!

[ffmpeg/demuxer] mov,mp4,m4a,3gp,3g2,mj2: stream 1, offset 0x30: partial file

[lavf] error reading packet: Invalid data found when processing input.

[ffmpeg] Cannot seek backward in linear streams!

[ffmpeg/demuxer] mov,mp4,m4a,3gp,3g2,mj2: stream 1, offset 0x30: partial file

[lavf] error reading packet: Invalid data found when processing input.

[ffmpeg] Cannot seek backward in linear streams!

[ffmpeg/demuxer] mov,mp4,m4a,3gp,3g2,mj2: stream 1, offset 0x30: partial file

[lavf] error reading packet: Invalid data found when processing input.

VLC seems to be able to, but it complains that it's not proper:

max-p@desktop ~> vlc https://lemmynsfw.com/pictrs/image/f482b4d7-957a-4ed7-a9ec-0493907a8cb3.mp4

VLC media player 3.0.20 Vetinari (revision 3.0.20-0-g6f0d0ab126b)

[00005ca01d39d550] main libvlc: Running vlc with the default interface. Use 'cvlc' to use vlc without interface.

[00007ea2f413f5c0] mp4 stream error: no moov before mdat and the stream is not seekable

Some players are more generous in what they tolerate but the file is undoubtedly mildly corrupted.

Lemmy should not let people upload corrupted files in the first place.
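The VLC error is about the order of the top-level boxes in the MP4: the index (moov) comes after the media data (mdat), which a player reading a non-seekable stream can't go back for. A minimal sketch of how a server could check that at upload time, assuming the whole file is available as bytes. The helper names are mine, not anything Lemmy or pict-rs actually has.

```python
import struct


def top_level_boxes(data: bytes):
    """Return (type, offset, size) for each top-level box in an MP4 byte string."""
    boxes = []
    offset = 0
    while offset + 8 <= len(data):
        size, box_type = struct.unpack_from(">I4s", data, offset)
        header = 8
        if size == 1:
            # A size of 1 means a 64-bit size follows the type field.
            if offset + 16 > len(data):
                break
            (size,) = struct.unpack_from(">Q", data, offset + 8)
            header = 16
        elif size == 0:
            # A size of 0 means the box runs to the end of the file.
            size = len(data) - offset
        if size < header:
            break  # malformed header, stop instead of looping forever
        boxes.append((box_type.decode("latin-1"), offset, size))
        offset += size
    return boxes


def moov_before_mdat(data: bytes) -> bool:
    """True only if a moov box appears before any mdat box."""
    order = [t for t, _, _ in top_level_boxes(data) if t in ("moov", "mdat")]
    return order[:1] == ["moov"]


def make_box(box_type: bytes, payload: bytes = b"") -> bytes:
    """Build a tiny box for demonstration purposes only."""
    return struct.pack(">I4s", 8 + len(payload), box_type) + payload


streamable = make_box(b"ftyp", b"isom") + make_box(b"moov") + make_box(b"mdat", b"\x00" * 4)
not_streamable = make_box(b"ftyp", b"isom") + make_box(b"mdat", b"\x00" * 4) + make_box(b"moov")
print(moov_before_mdat(streamable), moov_before_mdat(not_streamable))
```

If a file fails that check, remuxing it with ffmpeg's `-movflags +faststart` is the usual way to move moov to the front.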

I forgot about that, I should try it on my new laptop.

I'd say mostly because the client is fairly good and works about the way people expect it to work.

It sounds very much like a DropBox/Google Drive kind of use case and from a user perspective it does exactly that, and it's not Linux-specific either. I use mine to share my KeePass database among other things. The app is available on just about any platform as well.

Yeah NextCloud is a joke in how complex it is, but you can hide it all away using their all in one Docker/Podman container. Still much easier than getting into bcachefs over usbip and other things I've seen in this thread.

Ultimately I don't think there are many tools that can handle caching, downloads, going offline, and reconciling differences when back online, all in a friendly package. I looked and there's a page on Oracle's website about a CacheFS but that might be enterprise only, and there's catfs in Rust but it's alpha and can't work without the backing filesystem for metadata.

The problem is that you can't just convert a deb to rpm or whatever. Well you can and it usually does work, but not always. Tools for that have existed for a long time, and there are plenty of packages in the AUR that just repack a deb, usually proprietary software, sometimes with bundled hacks to make it run.

There's no guarantee that the libraries of a given distro are at all compatible with the ones of another. For example, Alpine and Void use musl while most others use glibc. These are not binary compatible at all. That deb will never run on Alpine, you need to recompile the whole thing against musl.
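You can see which libc a dynamically linked binary was built for by looking at the loader path stored in its ELF PT_INTERP program header: musl binaries ask for something like /lib/ld-musl-x86_64.so.1 while glibc ones ask for something like /lib64/ld-linux-x86-64.so.2. A rough sketch, assuming a 64-bit little-endian ELF. The function names and the substring matching are my own simplification, not a complete detector.

```python
import struct

PT_INTERP = 3  # program header type that holds the dynamic loader path


def elf_interpreter(data: bytes):
    """Return the PT_INTERP path of a 64-bit little-endian ELF image, or None."""
    if data[:4] != b"\x7fELF" or data[4] != 2 or data[5] != 1:
        raise ValueError("this sketch only handles 64-bit little-endian ELF")
    (e_phoff,) = struct.unpack_from("<Q", data, 32)           # program header table offset
    e_phentsize, e_phnum = struct.unpack_from("<HH", data, 54)
    for i in range(e_phnum):
        base = e_phoff + i * e_phentsize
        p_type, _p_flags, p_offset = struct.unpack_from("<IIQ", data, base)
        (p_filesz,) = struct.unpack_from("<Q", data, base + 32)
        if p_type == PT_INTERP:
            raw = data[p_offset:p_offset + p_filesz]
            return raw.rstrip(b"\0").decode()
    return None  # statically linked binaries have no interpreter


def guess_libc(interpreter):
    """Very rough guess based on the loader file name."""
    if interpreter is None:
        return "static or unknown"
    if "ld-musl" in interpreter:
        return "musl"
    if "ld-linux" in interpreter:
        return "glibc"
    return "unknown"
```

Something like `guess_libc(elf_interpreter(open("/bin/sh", "rb").read()))` would then tell you what the local shell was linked against.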

What makes a distro a distro is its choice of package manager, the way it handles dependencies, compile flags, package splitting, enabled feature sets, and so on. If everyone used the same binaries for compatibility we wouldn't have distros, we would have a single distro, like Windows but open-source, and heaven forbid anyone dares switch the compiler flags so it runs 0.5% faster on their brand new CPU.

The Flatpak approach is really more like "fine we'll just ship a whole Fedora-lite base system with the apps". Snaps are similar but they use Ubuntu bases instead (obviously). It's solving a UX problem, using a particular solution, but it's not the solution. It's a nice tool to have so developers can ship a reference environment in which the software is known to run well, and users that just want it to work can use those. But the demand for native packages will never go away, and people will still do it for fun. That's the nature of open-source. It's what makes distros like NixOS, Void, Alpine, Gentoo possible: everyone can try a different way of doing things, for different use cases.

If we can even call it a "problem". It's my distro's job to package the software, not the developer's. That's how distros work, that's what they signed up for by making a distro. To take Alpine again for example, they compile all their packages against musl instead of glibc, and it works great for them. It shouldn't become the developer's problem to care what kind of libc their software is compiled against. Using a Flatpak in this case just bypasses Alpine and musl entirely because it's gonna use glibc from the Fedora base system layer. Are you really running Alpine and musl at that point?

And this is without even touching the different architectures. Some distros were faster to adopt ARM than others for example. Some people run desktop apps on PowerPC like old Macs. Fine you add those to the builds and now someone wants a RISC-V build, and a MIPS build.

There are just way too many possibilities to ever end up with a universal platform that fits everyone's needs. And that's fine, that's precisely why developers ship source code, not binaries.

Easiest for this might be NextCloud. Import all the files into it, then you can get the NextCloud client to download or cache the files you plan on needing with you.

Yeah that's what it does, that was a shitpost if it wasn't obvious :p

Though I do use ZFS, where you configure the mountpoints in the filesystem itself. But it also ultimately generates systemd mount units under the hood. So I really only need one unit, for /boot.
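For anyone curious what that one unit looks like, here is a rough sketch that turns an fstab-style line into the text of a mount unit. The function names and the UUID in the example are made up, the name escaping only covers simple paths (no dashes or special characters, which systemd would escape), and the real systemd-fstab-generator does a lot more than this.

```python
def mount_unit_name(where: str) -> str:
    """Unit file name for a simple mount point, e.g. /boot -> boot.mount."""
    trimmed = where.strip("/")
    return (trimmed.replace("/", "-") if trimmed else "-") + ".mount"


def device_path(what: str) -> str:
    """Map fstab-style UUID=/LABEL= identifiers to /dev/disk paths."""
    if what.startswith("UUID="):
        return "/dev/disk/by-uuid/" + what[len("UUID="):]
    if what.startswith("LABEL="):
        return "/dev/disk/by-label/" + what[len("LABEL="):]
    return what


def fstab_line_to_unit(line: str) -> tuple[str, str]:
    """Return (unit file name, unit file text) for one fstab line."""
    what, where, fstype, options = line.split()[:4]
    text = "\n".join([
        "[Unit]",
        f"Description=Mount {where}",
        "",
        "[Mount]",
        f"What={device_path(what)}",
        f"Where={where}",
        f"Type={fstype}",
        f"Options={options}",
        "",
        "[Install]",
        "WantedBy=local-fs.target",
        "",
    ])
    return mount_unit_name(where), text


# Hypothetical /boot entry, the UUID is a placeholder.
name, unit = fstab_line_to_unit("UUID=ABCD-1234 /boot vfat defaults 0 2")
print(name)
print(unit)
```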

You guys still use fstab? It's systemd/Linux, you use mount units.

The page just deletes itself for me when using that. It loads and .5 second later it just goes blank. They really don't want people to bypass it.

Paywalled medium article? I'll pass.

Fuck employers that steal from their employees' paychecks though.

My experience with AI is it sucks and never gives the right answer, so no, good ol' regular web search for me.

When half your searches only give you like 2-3 pages of results on Google, AI doesn't have nearly enough training material to be any good.

Totally not setting up a loophole to dictate what gets researched and make sure no inconvenient things get discovered that would contradict the province's agenda or hurt local industries.

The whole point is that even if you take the setup and maintenance time out of the equation, it's still not very appealing for the reasons outlined.

There's been a general trend towards self-hosted GitLab instances in some projects:

Small projects tend to not want to spin up infrastructure, but on GitHub you know your code will still be there 10 years later after you disappear. The same cannot be said of my Gogs instance and whatever was on it.

And overall, GitHub has been pretty good to users. No ads, free, pretty speedy, and a huge community of users that already have an account where they can just PR your repo. Nobody wants to make an account on some random dude's instance just to open a PR.

No, but it does solve people not wanting to bother making an account for your effectively single-user self-hosted instance just to open a PR. I could be up and running in like 10 minutes to install Forgejo or Gitea, but who wants to make an account on my server? On GitHub, practically everyone has an account.

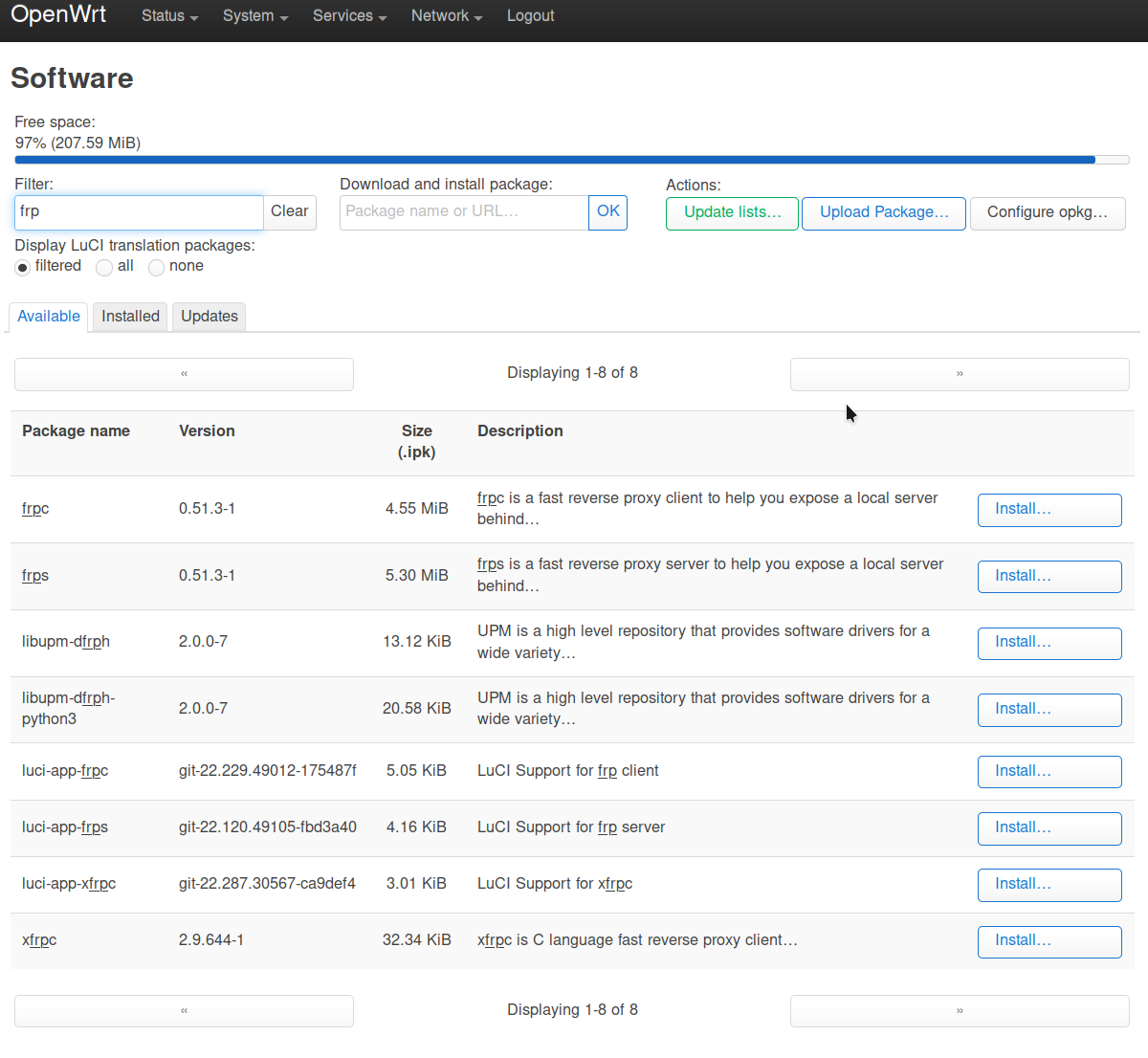

If you want FRP, why not just install FRP? It even has a LuCI app to control it from what it looks like.

NGINX is also available at a mere 1kb in size for the slim version, and the full version is available too, as well as HAProxy. Those will have you more than covered, and support SSL.

Looks like there's also acme.sh support, with a matching LuCI app that can handle your SSL certificate situation as well.

Example of what?

VoIP provider: voip.ms

They support like 5 different ways to deal with SMS and MMS, so there are options. https://wiki.voip.ms/article/SMS-MMS

Carrier that accepts texts by email: Bell Canada accepts emails at NUMBER@txt.bell.ca and delivers them as SMS or MMS to the number. Or at least they used to, I can't find current documentation about it and that feels like something that would be way too exploitable for spam.
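As a rough idea of what the email route looks like, here is a sketch that only builds the message, assuming the NUMBER@txt.bell.ca format still works, which I couldn't confirm. The function name, sender address and phone number are placeholders, and actually sending it would need an SMTP server on top of this.

```python
from email.message import EmailMessage

GATEWAY_DOMAIN = "txt.bell.ca"  # from the comment above, may no longer be accepted


def build_text_email(number: str, body: str, sender: str) -> EmailMessage:
    """Build an email addressed to a carrier's email-to-text gateway."""
    digits = "".join(ch for ch in number if ch.isdigit())
    if not digits:
        raise ValueError("phone number has no digits")
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = f"{digits}@{GATEWAY_DOMAIN}"
    msg.set_content(body)
    return msg


# Placeholder number and sender, nothing is sent here.
msg = build_text_email("(555) 123-4567", "hello", "me@example.com")
print(msg["To"])
```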

Most VoIP providers have either an HTTP API you can hit and/or email to/from text.

Additionally, some carriers do offer an email address that can be used to send a text to one of their users but due to spam it's usually pretty restricted.

It only shows "view all comments", so you can't see the full context of the comment tree.

The current behaviour is correct, as the remote instance is the canonical source, but being able to copy/share a link to your home instance would be nice as well.

Use case: maybe the comment is coming from an instance that is down, or one that you don't necessarily want to link to.

If the user has more than one account, being able to select which one would be nice as well, so maybe a submenu per account or a global setting.